
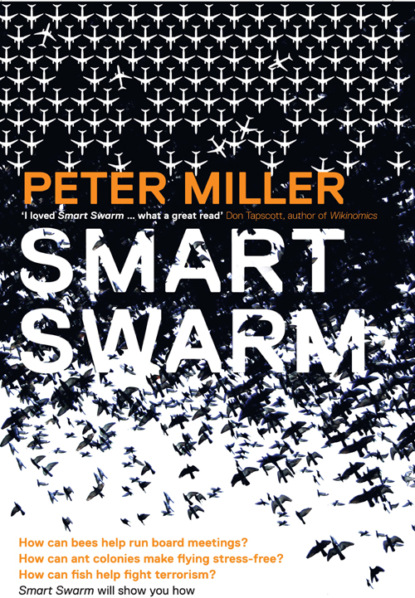

Smart Swarm: Using Animal Behaviour to Organise Our World

Year written

2018


Ant colonies, in other words, have evolved an ingenious way to determine the shortest path between two points. Not that any of the ants are doing so on their own. None of them attempts to compare the length of the two branches independently. Instead, the colony builds the best solution as a group, one individual after another, using pheromones to “amplify” early successes in an impressive display of self-organization.

Taking this idea one step further, Deneubourg and his colleagues proposed a relatively simple mathematical model to describe this behavior. If you know how many ants have taken the shorter branch at any particular time, Deneubourg said, you can reliably calculate the probability of the next ant choosing that branch. To demonstrate this, he plugged his team’s equations into a computer simulation of the double-bridge experiment and ran it for a thousand ants. The results mirrored those of real ants. When the branches were the same length, the odds of an ant picking either one were fifty-fifty. But when one branch was twice as long as the other, the odds of picking the shorter one shot up dramatically.
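The passage does not give the team's equations, so what follows is only a sketch of one commonly cited form of such a choice rule, in which the pull of each branch grows with the number of ants that have already marked it. The parameters `k` and `n`, their default values, and the `short_deposit`/`long_deposit` shortcut for branch length are all assumptions made for illustration.

```python
import random


def prob_choose_short(short_mark, long_mark, k=20.0, n=2.0):
    """Probability that the next ant picks the short branch.

    k (pull of an unmarked branch) and n (nonlinearity) are assumed,
    illustrative parameters, not values taken from the text.
    """
    short_pull = (k + short_mark) ** n
    long_pull = (k + long_mark) ** n
    return short_pull / (short_pull + long_pull)


def simulate_bridge(num_ants=1000, short_deposit=2.0, long_deposit=1.0, seed=0):
    """Send ants one at a time; return the fraction that took the short branch.

    Assumption: a branch half as long is re-marked twice as fast, which is
    modelled crudely here by a larger deposit per ant on the short branch.
    """
    rng = random.Random(seed)
    short_mark = long_mark = 0.0
    took_short = 0
    for _ in range(num_ants):
        if rng.random() < prob_choose_short(short_mark, long_mark):
            short_mark += short_deposit
            took_short += 1
        else:
            long_mark += long_deposit
    return took_short / num_ants


print(prob_choose_short(0, 0))   # equal marks give even odds
print(simulate_bridge())         # share of 1000 ants on the short branch
```

Setting `short_deposit` equal to `long_deposit` mimics two branches of the same length, where neither side has a built-in advantage.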

The key to the colony’s system, in short, lay in the simple rules that each ant applied to local information. If you changed these rules, you would change the behavior pattern of the whole colony.

The implications of this discovery were not lost on Dorigo: If real ant colonies could find the shortest path between two points, then why couldn’t researchers do the same thing with “virtual ants”? Dorigo knew how to design software “agents” that could follow simple rules just like real ants do. Why couldn’t these software agents find the shortest path, too? Only, what if the path wasn’t the distance between an ant nest and a pile of food? What if it was the shortest route for a message across the Internet between two computers? Or the shortest distance for a package going from a factory warehouse in California to a customer in Florida? Or the shortest path between multiple steps in an industrial process? Then there was the concept of the “shortest path” itself. What if you redefined “shortest” as “most efficient” or “least costly”? Wouldn’t that be a handy tool?

“When I went back to Milan to discuss these ideas with Professor Alberto Colorni, who was supervising my work, he asked me to write a simple program as a proof of principle, to show it wasn’t just a crazy idea,” Dorigo says. At the time, Dorigo was working on a class of mathematical puzzles known as combinatorial optimization problems, which are relatively easy to describe but deceptively difficult to solve. One of the best-known examples is the traveling salesman problem, which involves the following scenario: A salesman needs to visit customers in a number of cities. What’s the shortest path he can take to visit each city once before returning home?

When the problem involves just a few cities—let’s say, Moscow, Hong Kong, and Paris—you can figure out the answer on the back of an envelope. Leaving from the airport near his home in Cleveland, the salesman has three options for his first stop: Moscow, Hong Kong, or Paris. Let’s say he chooses Hong Kong. From there he has two choices: Moscow or Paris. Let’s say he flies to Paris. That leaves only Moscow before he can fly home. If you made a list of all the other possible sequences (such as Paris to Moscow to Hong Kong, or Moscow to Hong Kong to Paris, and so on), you would have a total of six to consider. Compare the mileage for each sequence and you have your answer.
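The back-of-the-envelope method can be sketched in a few lines: list every ordering of the three cities, add up the legs, and keep the shortest. The distances below are made-up placeholder numbers in arbitrary units, not real mileages, and the function names are hypothetical.

```python
from itertools import permutations

# Made-up distances (arbitrary units) purely for illustration;
# they are NOT real mileages between these cities.
DIST = {
    frozenset(("Cleveland", "Moscow")): 8,
    frozenset(("Cleveland", "Hong Kong")): 13,
    frozenset(("Cleveland", "Paris")): 6,
    frozenset(("Moscow", "Hong Kong")): 7,
    frozenset(("Moscow", "Paris")): 3,
    frozenset(("Hong Kong", "Paris")): 10,
}


def tour_length(home, stops):
    """Total distance of home -> each stop in order -> home."""
    route = [home, *stops, home]
    return sum(DIST[frozenset(pair)] for pair in zip(route, route[1:]))


def all_tours(home, cities):
    """Every possible visiting order, paired with its total distance."""
    return [(tour_length(home, order), order) for order in permutations(cities)]


tours = all_tours("Cleveland", ["Moscow", "Hong Kong", "Paris"])
best_length, best_order = min(tours)
print(len(tours))  # 6 sequences to compare
print(best_length, best_order)
```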

But here’s the tricky part. If you add a fourth city to the salesman’s journey, you make the problem significantly more difficult. Now you have four times as many possible routes to consider: twenty-four instead of six. Add a fifth city and you get 120 possible routes. Jump ahead to ten cities and you’re talking about more than 3.6 million possible routes. The number of solutions, in other words, grows factorially with each new city, which is even faster than exponential growth. When you get to thirty cities, there aren’t enough years in a lifetime to list all the possible routes.
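The route counts quoted here are the number of ways to order the cities, which is the factorial of the number of cities. A short check, with `count_routes` as a hypothetical helper name:

```python
from math import factorial


def count_routes(num_cities):
    """Number of distinct visiting orders for the given number of cities."""
    return factorial(num_cities)


for n in (3, 4, 5, 10, 30):
    print(n, count_routes(n))
```

For ten cities this gives 3,628,800 orderings; for thirty, the count has 33 digits.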

Dorigo thought it might be interesting instead to let virtual ants give it a try. Rather than trying to identify every possible outcome of the salesman’s decision making, the ant system used trial-and-error shortcuts to find a handful of good ones. Instead of being straightforward and linear, it was decentralized and distributed. Instead of calling for complicated calculations, it relied on simple rules of thumb. Instead of getting swamped by the exponential nature of the math, it took advantage of that same snowballing effect to rapidly turn small differences into big advantages. It was different, in other words, because it harnessed self-organization in a smart way.

So Dorigo and Vittorio Maniezzo, a fellow graduate student, created a set of virtual ants capable of cooperating with one another to find the shortest route for the traveling salesman. Their secret weapon: “virtual pheromones” that the ants would leave along the way. Imagine a map with fifteen cities that a salesman needed to visit. At the beginning of the first cycle, ants were placed randomly on all of the cities. Then each ant used a formula based on probability to decide which city to visit next. This formula considered two factors: which city was closest, and which city had the strongest pheromone trail leading to it. At the start, there were no pheromone trails, so the closest cities tended to be selected. As soon as each ant completed a tour of all fifteen cities, it retraced its path, depositing virtual pheromone on its route. The shortest routes discovered by the ants tended to receive the most pheromone, while the longest ones were allowed to “evaporate” more rapidly. This enabled the ants, as a group, to remember the best routes. So when the second cycle was run, and the ants worked their way from city to city again, each ant built upon the successes of the first cycle by favoring the strongest pheromone trails. After repeating this time and again, the ants kept reducing their travel times, until the pheromone trails on the shortest segments were so strong that none of them could resist choosing them.
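The cycle described above can be sketched roughly as follows. This is a simplified illustration under stated assumptions, not Dorigo and Maniezzo's actual program: the parameter names and values (`alpha`, `beta`, `evaporation`, `num_ants`, `cycles`) are assumed, trails start at a uniform level of 1.0 to stand in for "no trails yet," and all trails evaporate at the same rate while shorter tours simply deposit more.

```python
import math
import random


def tour_length(tour, dist):
    """Length of a closed tour that returns to its starting city."""
    return sum(dist[a][b] for a, b in zip(tour, tour[1:] + tour[:1]))


def build_tour(start, dist, pheromone, rng, alpha, beta):
    """One ant picks cities one by one, favoring near and well-marked ones."""
    tour = [start]
    unvisited = set(range(len(dist))) - {start}
    while unvisited:
        here = tour[-1]
        options = sorted(unvisited)
        weights = [
            (pheromone[here][c] ** alpha) * ((1.0 / dist[here][c]) ** beta)
            for c in options
        ]
        nxt = rng.choices(options, weights=weights, k=1)[0]
        tour.append(nxt)
        unvisited.remove(nxt)
    return tour


def ant_system(coords, num_ants=10, cycles=30, alpha=1.0, beta=2.0,
               evaporation=0.5, seed=0):
    """Return (best_tour, best_length) found over all cycles."""
    rng = random.Random(seed)
    n = len(coords)
    dist = [[math.dist(p, q) for q in coords] for p in coords]
    # Uniform starting level: no edge is preferred before the first cycle.
    pheromone = [[1.0] * n for _ in range(n)]
    best_tour, best_len = None, float("inf")
    for _ in range(cycles):
        tours = [build_tour(rng.randrange(n), dist, pheromone, rng, alpha, beta)
                 for _ in range(num_ants)]
        # Evaporation: every trail fades by the same fraction each cycle.
        for row in pheromone:
            for j in range(n):
                row[j] *= 1.0 - evaporation
        # Deposit: shorter tours lay more pheromone on each segment they used.
        for tour in tours:
            length = tour_length(tour, dist)
            if length < best_len:
                best_tour, best_len = tour, length
            for a, b in zip(tour, tour[1:] + tour[:1]):
                pheromone[a][b] += 1.0 / length
                pheromone[b][a] += 1.0 / length
    return best_tour, best_len


# Four cities on the corners of a unit square; the perimeter has length 4.
square = [(0, 0), (0, 1), (1, 1), (1, 0)]
print(ant_system(square))
```

Raising `beta` makes the ants lean harder on distance, while raising `alpha` makes them follow existing trails more closely.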

The results were quite encouraging.

“We discovered that the ants could find nearly optimal solutions for as many as thirty, fifty, or even a hundred cities,” Dorigo says.

Not that the ants didn’t sometimes make mistakes. If a particular ant, while hopping from city to city, happened to get caught in a loop along the way—like a twig in a river eddy—other ants occasionally followed, resulting in a nonsolution to the problem. To prevent this, Dorigo and Maniezzo instructed the ants to forget such loops when depositing pheromone on completed trails. Other problems required similar fixes. But none of the ants’ bad habits were so serious that they couldn’t be tweaked in one way or another, or made more effective by pairing them with more specialized algorithms.

“The important thing was that the ant colony optimization worked because of the implicit cooperation among many agents,” Dorigo says. “On their own, each artificial ant built a solution which was usually not very good. But together, by exchanging information (not talking to each other, but simply by exchanging information through virtual pheromones), the ants ended up finding some very good solutions.” In this way, cooperation became cumulative. Instead of representing simply the sum of the ants’ individual efforts, the search got smarter as it went forward, powered by the mechanisms of self-organization: decentralized control, distributed problem-solving, and multiple interactions among agents.

It wasn’t long before other computer scientists were adapting the ant-colony approach to solve a variety of difficult problems. A few researchers even experimented with real-world situations. At Hewlett-Packard’s lab in Bristol, England, for example, scientists created software to speed up telephone calls. Using a simulation of British Telecom’s network, they dispatched antlike agents into the system to leave pheromone-like signals at routing stations, which function as intersections for traveling messages. If a station accumulated too much digital pheromone, it meant that traffic there was too congested, and messages were routed around it. Since the pheromone evaporated over time, the system was also able to adapt to changing traffic patterns as soon as congested stations opened up again.
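Following only the description in this paragraph, and not the actual Hewlett-Packard software, the two ingredients (routing around heavily marked stations and letting marks fade) might look like this. The station names, the `limit` threshold, and the evaporation `rate` are hypothetical.

```python
def evaporate(levels, rate=0.1):
    """Fade every station's pheromone-like marker by a fixed fraction."""
    return {station: level * (1.0 - rate) for station, level in levels.items()}


def next_hop(preferred, alternates, levels, limit=5.0):
    """Use the preferred station unless its marker exceeds the limit."""
    if levels.get(preferred, 0.0) <= limit:
        return preferred
    # Route around the congested station via the least-marked alternate.
    return min(alternates, key=lambda s: levels.get(s, 0.0), default=preferred)


levels = {"hub": 8.0, "side": 1.0}
print(next_hop("hub", ["side"], levels))   # hub is over the limit
levels = evaporate(levels, rate=0.5)
print(next_hop("hub", ["side"], levels))   # hub has faded to 4.0
```

Because the markers decay on their own, a station that was avoided earlier becomes eligible again without any central reset.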

What did an ant-based algorithm offer that other techniques didn’t? The answer goes back to the foragers from Colony 550. If conditions in the desert changed while the ants were out searching for seeds—if something unpredictable disrupted the normal flow of events, such as a hungry lizard slurping down ants—then the colony as a whole reacted quickly: foragers raced back to the nest empty-handed; other ants didn’t go out. They didn’t wait for news about the disruption to travel up a chain of command to a manager, who evaluated the situation before issuing orders that traveled back down the chain to workers, as might happen in a human organization. The colony’s decision making was decentralized, distributed among hundreds of foragers, who responded instantly to local information. In the same way, virtual ants racing through the telephone network responded instantly to congested traffic. In both cases, an ant-based algorithm offered a flexible response to an unpredictable environment, and it did so using the principles of self-organization.

An obvious application of this approach would be to develop an algorithm—or set of algorithms—that would enable a business enterprise to respond to changes in its environment as quickly and effectively as ant colonies do. That’s exactly what a company in Texas set out to accomplish.

The Yellow Brick Road

Charles Harper looked out his office window at the flat landscape south of Houston. As director of national supply and pipeline operations at American Air Liquide, a subsidiary of a $12 billion industrial group based in Paris, he supervised a team monitoring a hundred or so plants producing medical and industrial gases. This was a daunting task on the best of days. The company’s operations were so complex that no two situations ever looked the same.

Air Liquide sold different types of gas to a wide range of customers. Hospitals bought oxygen, as did paper mills and plastics manufacturers. Ice cream makers used liquid nitrogen to freeze their goods. So did berry packers and crawfish shippers. Soft drink companies purchased carbon dioxide to add fizz to their beverages. Oil refineries took several gases, as did steel mills. All told, Air Liquide delivered gas products to more than fifteen thousand customers across the United States, using a fleet of seven hundred trucks, three hundred rail cars, and a 2,200-mile network of pipelines.

All these moving parts, however, were just the beginning of the business problem. The real complexity came from the variables the company had to cope with. The cost of energy, for example, fluctuated constantly. In Texas, where the power industry was deregulated in 2002, the price of electricity changed every fifteen minutes. “For an industrial customer, a megawatt might cost $18 at three a.m., then shoot up to $103 the following afternoon,” Harper says. Since energy was one of Air Liquide’s biggest expenses, accounting for up to 70 percent of the cost of production, these ups and downs had a huge impact on the bottom line.

Other factors affected production costs. Each of the plants producing gases in gaseous or liquid form had a different efficiency level, different cost profile, and different capacity. Many, for example, could produce either liquid oxygen or liquid nitrogen in varying combinations. For customers who needed delivery by truck, a plant could pump liquid gases into cryogenic trailers. For those on pipelines, it could vaporize the gases and send them that way.

Customer demand was yet another variable. Although some customers, usually the largest ones, took the same amount of gas every week, many others were unpredictable. A small company might order gas only when it got a big contract, then order none for months. About 20 to 30 percent of Air Liquide’s customers made a habit of calling in special requests. “If a big medical center calls us up and says, hey, we need a delivery of oxygen right away, we’re going to make sure they don’t run out,” Harper says. But such requests put a strain on scheduling.

Combine all these factors—fluctuating energy prices, changing production costs, varying delivery modes, and uncertain customer demand—and you’ve got a difficult situation to manage. Sooner or later, something unpredictable, like a mechanical problem at a plant, is going to put you in a bind, and you won’t have enough gas to serve customers in that region. “We were always having incidents like that,” says Clarke Hayes, Air Liquide’s real-time operations manager. “It finally got to the point where we said, you know what, we need a tool that helps us organize better.”

The company already had special-purpose programs to optimize particular aspects of its operations, but it didn’t have a way to pull it all together. In late 1999, a team from Bios Group, a consulting firm from Santa Fe, New Mexico, founded by complexity scientists, came to Air Liquide with an unorthodox proposal. Why not build a computer model based on the self-organizing principles of an ant colony? This model, they suggested, would take into account all the variables that were making planning so difficult as a way to help managers find solutions to day-to-day challenges. As a start, they suggested tackling the company’s truck-routing problem: the question of which truck should pick up gas from which plant and deliver it to which customer to be most profitable for the company. If ants had evolved a clever way to move things from one place to another, they said, why not apply that knowledge to Air Liquide’s trucks?

“The scientists were wonderful to talk to,” Harper says. “But the issue for us was, can they understand the industrial gas business? So we took a small piece of our geography and asked them to digitize that. To show us they understood the complexity of the trucks, the drivers, the depot costs, the miles per gallon, all the anomalies. What if a customer’s tank was on a hill? If you pull up in the wrong direction, or if your truck’s not full, the liquid won’t get in the pump and you can’t fill the tank. So you have to make that customer the first stop on your route. There are hundreds of those kinds of things, and they drive you crazy. But they all needed to be in the model.”

Alberto Donati was one of the scientists at Bios Group assigned to work on the Air Liquide pilot project. Because he had previous experience with ant-based algorithms, he was asked to work on the distribution side of the decision-support system. The approach he took was inspired by the one Marco Dorigo and Eric Bonabeau, another computer scientist, had developed for the traveling salesman problem and similar difficult problems.

“The ant algorithm was a very good choice in this case, because it creates a step-by-step procedure to find the best routing solution,” Donati says. At every step, even the most complex situation could be taken into account. Each ant had a sort of “to do” list that it kept working on until the list was complete, he explained. Let’s say the list was of Air Liquide customers that need deliveries today. “Imagine the ant starts at the depot,” Donati says. “First she has to choose a truck. So she looks at the available list of trucks, and then she picks a driver. So what does she do next? Maybe she goes to the facility to fill up the truck. Now she considers all the possible customers that need that kind of gas. She calculates the time it would take to reach each customer’s site. Perhaps there are some customers with restricted time windows for deliveries, or others with high priority for deliveries. Then the ant looks at each customer using what we call a greedy function.” The term greedy, in this case, refers to a decision-making rule that delivers the best results in a short time frame. “Choose the nearest customer,” for example, is a typical greedy function. “She also takes into account the pheromone trail,” Donati says. “Other ants may have chosen the same path and left some pheromone. So she multiplies the greedy factors by the pheromone factor to determine which customer to choose next.” (This decision is modified by a small degree of randomness to occasionally allow choices that would be hard to predict.) “When she gets to the customer, she unloads the needed amount of the truck’s liquid, keeping track of how much time it takes to do that and how much is left in the truck. Then she goes back to the list of customers she hasn’t visited yet.” And so on, and so on, until all the customers have been visited and assigned to a route.
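The selection step Donati describes (a greedy score multiplied by a pheromone factor, with a small dose of randomness) could be sketched as below. This is an illustration only, not the Bios Group code: the greedy rule used here is simply "nearest first," and `explore_prob`, the data layout, and the customer names are assumptions.

```python
import random


def choose_next_customer(here, pending, travel_time, pheromone, rng,
                         explore_prob=0.05):
    """Pick the next delivery: greedy score times pheromone, plus rare randomness.

    pending is a list of customers not yet visited; travel_time and
    pheromone are dicts keyed by (from, to) pairs.
    """
    if rng.random() < explore_prob:
        return rng.choice(pending)  # occasional hard-to-predict choice

    def score(customer):
        greedy = 1.0 / travel_time[(here, customer)]  # nearer is better
        return greedy * pheromone.get((here, customer), 1.0)

    return max(pending, key=score)


rng = random.Random(0)
times = {("depot", "a"): 10.0, ("depot", "b"): 20.0}
trail = {("depot", "b"): 3.0}  # earlier ants favored the leg to b
print(choose_next_customer("depot", ["a", "b"], times, trail, rng, explore_prob=0.0))
```

With no trail, the nearer customer `a` wins; with the trail above, the pheromone outweighs the longer drive and `b` is chosen.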

At this point, the ant computes the quality of the solution and lays down a pheromone trail according to its quality. This process is repeated, ant after ant, thousands of times. “The nice part is that, when you’re near the finish, you will see that the ants have left a clear distribution of pheromones around your system,” Donati says. Each new solution is compared to the best previous one. If it’s better, it becomes the best one. It’s all a matter of balance between exploration and exploitation, he says.
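The bookkeeping at the end of each ant's run, depositing pheromone in proportion to quality and keeping the best plan so far, might be sketched like this. Treating quality as the inverse of total cost is an assumption, as are the function name and `deposit_scale`.

```python
def update_after_ant(solution, cost, best, pheromone, deposit_scale=1.0):
    """Record one ant's result; return the (possibly new) best (solution, cost).

    solution is the ordered list of stops; pheromone is a dict keyed by
    (from, to) legs and is updated in place.
    """
    quality = deposit_scale / cost  # cheaper plans earn more pheromone
    for leg in zip(solution, solution[1:]):
        pheromone[leg] = pheromone.get(leg, 0.0) + quality
    if best is None or cost < best[1]:
        return (solution, cost)
    return best


pheromone = {}
best = None
best = update_after_ant(["depot", "a", "b"], 10.0, best, pheromone)
best = update_after_ant(["depot", "b", "a"], 20.0, best, pheromone)
print(best)
```

Keeping the best plan separately is the exploitation half of the balance; the trails, which still reward every route tried, keep some exploration alive.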

The pilot project was a big hit at Air Liquide, proving to managers that an ant-based model was flexible enough to handle the complexities of their routing problem. But what Air Liquide really wanted was to optimize production, since the cost of producing gas was ten times that of delivering it. So they enlisted Bios Group, which by then had merged with a company called NuTech Solutions, to develop a tool to optimize production. That tool, completed in 2004, is the one Air Liquide uses to guide its business today.

Technicians in the control center run this optimizer every night. They begin at eight p.m. by entering new data about plant schedules, truck availabilities, and customer needs into the model. A telemetry-based system called SCADA (Supervisory Control and Data Acquisition) feeds real-time information about the efficiency of each plant, gas levels in storage tanks, and the cost of power, among other factors. A neural network forecast engine provides estimates of which customers must get deliveries right away, based on telemetry readings and previous customer-usage patterns. Weather forecasts by the hour are entered, as are estimated power costs for the next week. Finally, any miscellaneous information is added that might affect schedules, such as which plants need maintenance in the near future.

The optimizer is then asked to consider every permutation—millions of possible decisions and outcomes—to come up with a plan for the next seven days. To do so, it combines the ant-based algorithm with other problem-solving techniques, weighing which plants should produce how much of which gas. To speed up run times, technicians divide the country into three regions: west of the Rockies, Gulf Coast, and the eastern states. Then they run the model three times for each region. By the time the day crew arrives at work at six a.m., the optimizer has solutions for each region.

People still make all the decisions. But now, at least, they know where they need to go. “One of the scientists called this approach the Yellow Brick Road,” Harper says. “Basically, instead of worrying about the absolute answer, we let the optimizer point us toward the right answer, and by the time we take a few more steps, we rerun the solutions and get the next direction. So we don’t worry about the end point. That’s Oz. We just follow the Yellow Brick Road one step at a time.”

This ant-inspired system has helped Air Liquide reduce its costs dramatically, primarily by making the right gases at the right plants. Exactly how much, company officials are reluctant to say, but one published estimate put the figure at $20 million a year.

“It’s huge,” Harper says. “It’s actually huge.”

Lessons from Checkers

During the 1950s, an electrical engineer at IBM named Arthur Samuel set out to teach a machine to play checkers. The machine was a prototype of the company’s first electronic digital computer, called the Defense Calculator, and it was so big it filled a room. By today’s standards, it was a primitive device, but it could execute a hundred thousand instructions a second, and that was all that Samuel needed.

He chose checkers because the game is simple enough for a child to learn, yet complicated enough to challenge an experienced player. What makes checkers fun, after all, is that no two games are likely to be exactly the same. Starting with twelve pieces on each side and thirty-two squares on the game board to choose from (checkers is played only on the dark squares), the number of possible board configurations from start to finish is practically endless. You can play over and over and never repeat the same sequence of moves. This gives checkers what complexity experts call perpetual novelty.

For Samuel’s computer, that was a problem. If every move theoretically could lead to billions of possible configurations of the game board, how could it choose the best one to make? Compiling a comprehensive list of results for each move would simply take too long—just as it would for Marco Dorigo in the traveling salesman problem. So Samuel gave the machine a few basic features to look for. One was called pieces ahead, meaning the computer should count how many pieces it had left on the board and compare that with its opponent’s. Was it two pieces ahead? Three pieces ahead? If a particular move resulted in more pieces ahead, it was likely to be favored. Other features specified favorable regions of the board. Penetrating the opponent’s side was considered advantageous, for example. So was dominating the middle. And so on.
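Scoring a move by a handful of weighted features can be sketched as a simple weighted sum. This is an illustration of the idea, not Samuel's program: apart from pieces ahead, the feature name `center_control` and all the weights are hypothetical.

```python
def pieces_ahead(own_pieces, opponent_pieces):
    """How many more pieces the machine has than its opponent."""
    return own_pieces - opponent_pieces


def evaluate(features, weights):
    """Weighted sum of the features of one board position."""
    return sum(weights[name] * value for name, value in features.items())


def best_move(candidate_moves, weights):
    """candidate_moves maps a move label to the features of the resulting board."""
    return max(candidate_moves, key=lambda m: evaluate(candidate_moves[m], weights))


weights = {"pieces_ahead": 1.0, "center_control": 0.5}
moves = {
    "jump": {"pieces_ahead": pieces_ahead(12, 11), "center_control": 0},
    "advance": {"pieces_ahead": pieces_ahead(12, 12), "center_control": 1},
}
print(best_move(moves, weights))
```

Here the jump scores 1.0 and the advance 0.5, so the move that leaves the machine a piece ahead is favored.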

Samuel also taught the computer to learn from its mistakes. If a move based on certain features failed to produce a favorable outcome, then the computer gave less weight to those features the next time around. In addition, he showed the computer how to recognize “stage-setting” moves—those that didn’t help out in an obvious way right now, such as a move that sacrificed a piece, but set up a later move with a bigger payoff, such as a triple jump. The machine did this after the fact by increasing the weight of features that favored the stage-setting move. Finally, he told the computer to assume that its opponent knew everything that it knew so the opponent would inflict the greatest damage possible whenever it could. That forced the machine to factor in potentially negative consequences of moves as well as positive ones. If it got surprised by an opponent anyway, it adjusted the weights to avoid that mistake next time.
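Two of the ideas in this paragraph lend themselves to a short sketch: assuming the opponent always picks the reply that hurts most (a minimax search), and nudging feature weights after a good or bad outcome. The update rule below is a deliberately simple stand-in, not Samuel's actual procedure, and `rate` and the outcome encoding are assumptions.

```python
def minimax(node, maximizing=True):
    """Value of a game tree whose leaves are scores from the machine's view.

    A node is either a number (a leaf) or a list of child nodes. The machine
    maximizes on its turns; the opponent is assumed to minimize on its own.
    """
    if isinstance(node, (int, float)):
        return node
    values = [minimax(child, not maximizing) for child in node]
    return max(values) if maximizing else min(values)


def adjust_weights(weights, features_used, outcome, rate=0.1):
    """Shift weight toward features behind good outcomes, away after bad ones.

    outcome is +1 for a favorable result and -1 for an unfavorable one.
    """
    return {
        name: w + rate * outcome * features_used.get(name, 0.0)
        for name, w in weights.items()
    }


# Two possible moves; the opponent then picks the worse leaf for the machine.
print(minimax([[3, 5], [2, 9]]))
print(adjust_weights({"pieces_ahead": 1.0}, {"pieces_ahead": 1.0}, outcome=-1))
```

In the small tree, the second move offers a tempting 9 but the opponent would answer with 2, so the first move (worth 3) is the safer choice.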

Samuel’s project was so successful that the computer was soon beating him on a regular basis. By the end of the 1960s, it was defeating checkers champions.

“All in all, his was a remarkable achievement,” writes John Holland, another pioneer of artificial intelligence, in his book Emergence: From Chaos to Order. “We are nowhere near exploiting these lessons that Samuel put before us almost a half century ago.”

To Holland, who shared a lab with Samuel at IBM, the true genius of the checkers program was the way it modified the weights of a handful of features to cope with the game’s daunting complexity. Because it was impractical at the time to “solve” the game of checkers mathematically by calculating the perfect sequence of moves, as you might do with a simpler game, such as tic-tac-toe, Samuel just wanted his computer to play the game better each time. “The emergence of good play is the objective of Samuel’s study,” Holland wrote.

What Holland meant by emergence was something quite specific. He was referring to the process by which the computer formed a master strategy as a result of individual moves, or, as he put it more generally, the phenomenon of much coming from little. Although everything the program did was “fully reducible to the rules (instructions) that define it,” he says, the behaviors generated by the game were “not easily anticipated from an inspection of those rules.”

We saw the same thing, of course, in Colony 550. Even though individual ants were following simple rules about foraging, their pattern of behavior as a group added up to a surprisingly flexible strategy for the colony as a whole. One colony might tend to be more aggressive in its style of foraging, sending out lots of foragers, while another might be more conservative, keeping them safe inside. Each colony didn’t impose its strategy on the foragers; the strategy emerged from their interactions with one another.

The same could be said about many complex systems, from beehives and flocks of birds to stock markets and the Internet. Whenever you have a multitude of individuals interacting with one another, there often comes a moment when disorder gives way to order and something new emerges: a pattern, a decision, a structure, or a change in direction. This whole chapter, in fact, has been about the kinds of strategies that emerge from self-organized behavior. And what these strategies all have in common is that they represent a way to cope with the unpredictable.

Consider life in an ant colony, where survival means competing not only against other colonies but also against an ever-changing environment. Will there be enough food today? Where will it be found? How will the weather affect the nest? The colony meets such challenges through self-organized behavior, and what emerges is a pattern of activity that allocates the colony’s resources to meet its immediate needs.

Air Liquide, for its part, had its own list of unknowns. Which customers would need deliveries today? What types of gas would they need? Which production facilities could make those gases at the least cost? What would the price of electricity be at those facilities? How could the company deliver those gases most economically? By emulating an ant colony’s distributed problem-solving approach, the company’s optimizer tool provided a day-to-day plan to cope with an endless string of variables.

Like many businesses today, Air Liquide was looking for a way to cope with the perpetual novelty of its environment. The company didn’t expect a guarantee that it would win every competition it got into, just an opportunity to stay in the game until it could adapt to the latest changes. What it needed, in other words, was a strategy to gain a degree of control over the uncontrollable, which was what Samuel’s checkers player also seemed to promise.

That was quite different, in an important way, from what Deborah Gordon’s ant colonies were trying to do. Instead of attempting to outsmart the desert environment, the ants, in a sense, were matching its complexity with their own. If Colony 550 were to play a game of checkers, each piece on the board would move by itself, acting on local information, with nobody waiting for instructions. The game would be a swirl of motion as pieces moved forward, jumped over one another, became kings, or got taken as prisoners in patterns of interactions that might be difficult to perceive at first glance. But if checkers were as important to ants as foraging, the colony, without doubt, would be a flexible and resilient competitor.

This tension between minimizing uncertainty, on the one hand, and experimenting to keep up with change, on the other, is something we’ll see time and again throughout this book. And what’s surprising about the behavior evolved by bees, birds, and fish, among other species, is the adroit way that groups of such animals manage to have it both ways—to manage complexity and to partake of it at the same time.